Your team spent months planning. You picked a cloud platform, hired the right vendor, and put together a business case that finance approved. Then, six months after go-live, the data lake sits mostly unused. Analysts still pull reports from the old ERP. Pipelines break on weekends. The data engineering team spends more time on incidents than new work.

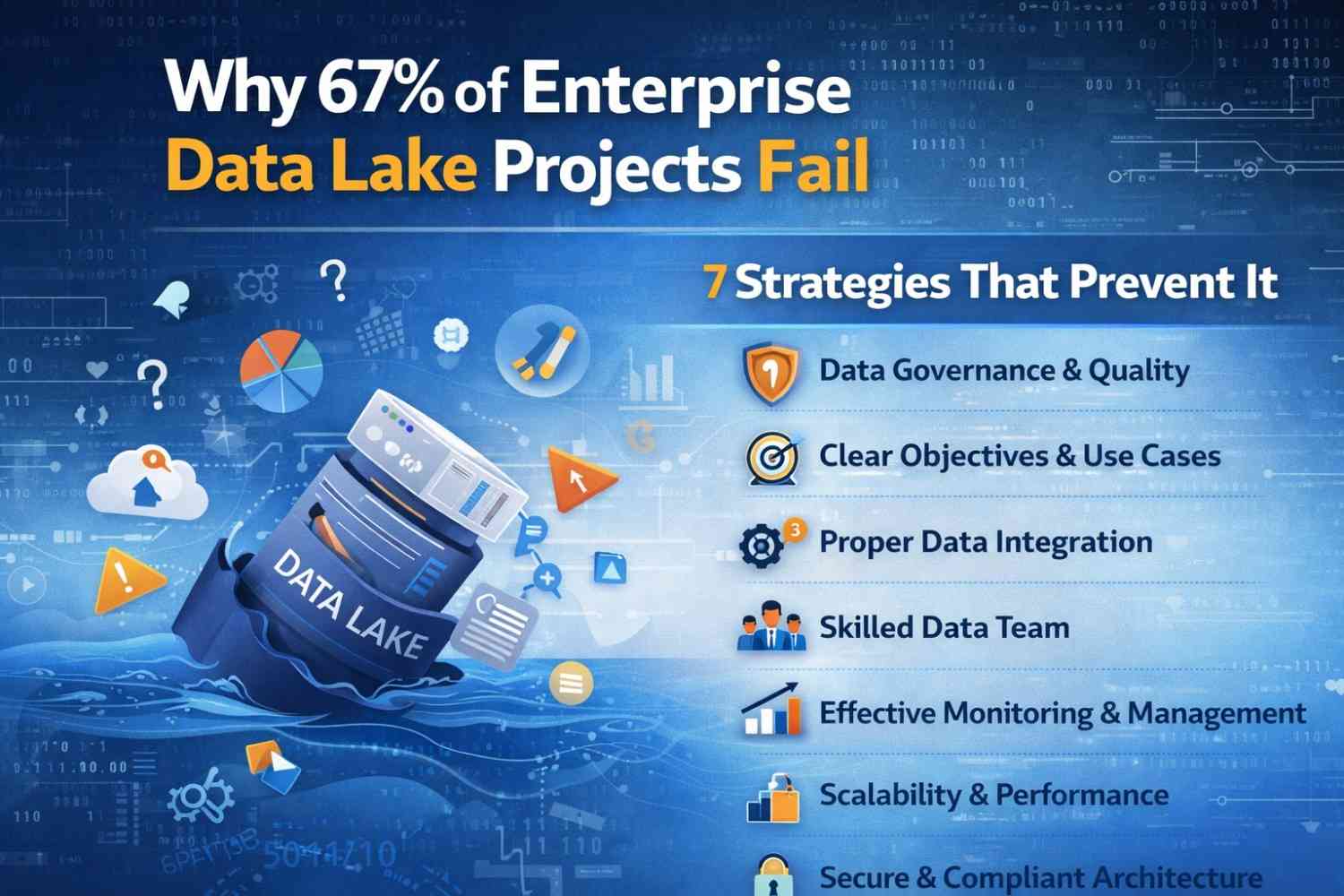

This is not a rare situation. Gartner puts the failure rate for enterprise data lake projects at 60 to 70 percent. Other industry surveys, including Enterprise Data World’s 2025 report, land in the same range. The number is high, and the reasons are consistent.

These projects do not fail because of bad technology. They fail because of decisions made before the first ingestion job runs. At Hexaview Technologies, we have worked with enterprise teams across the US on data lake builds, rescues, and redesigns. We see the same six failure patterns on repeat. This post names them and gives you seven strategies that actually prevent them.

What Does a Failed Data Lake Actually Look Like?

The word ‘failure’ covers a wide range of outcomes. Some projects miss budget by 3x. Some deliver on time but never reach adoption. Some get used briefly and then abandoned when the data turns out to be unreliable.

In enterprise data engineering, we define a failed data lake as one that does not reach its target adoption level within the planned timeline. That means the people who were supposed to use it (your analysts, data scientists, and operations teams) are not using it, or tried and stopped.

A 2025 survey from Enterprise Data World found that 58% of US companies with active data lake initiatives reported that fewer than half of their target users accessed the lake on a monthly basis. That matches what we see on the ground.

| 📊 Real-World Context |
| --- |
| A US-based insurance carrier engaged Hexaview Technologies in early 2025 after spending 18 months and $4.2M on an Azure Data Lake build. The core problem: engineers built the system for themselves, not for the actuarial teams who needed it. Adoption sat at 12%. After restructuring the data access layer and governance model over six months, adoption rose to 71%. |

6 Root Causes Behind Most Enterprise Data Lake Failures

1. Building Infrastructure Before Defining the Use Case

Teams go straight to technology selection. They choose AWS S3 or Azure Data Lake Storage before they can answer: “What business decision will this data power?” Without a specific, outcome-tied use case, the data lake becomes an expensive storage bucket. Data lands. Nobody queries it.

2. Skipping Data Governance at the Start

Governance is not a cleanup task. When teams skip it at the beginning, data quality drops fast. Duplicate records pile up. Metadata goes missing. Data lineage becomes impossible to trace. Analyst confidence breaks within months, and once it breaks, it takes longer to rebuild than the original project took to build.

3. Schema-on-Read Without Any Guardrails

Schema-on-read, which stores raw data now and applies structure later, is a real and useful pattern. The problem comes when teams use it as a reason to dump data with no plan at all. Without input validation and at least a minimal schema contract, data lakes fill with inconsistent files that downstream consumers cannot parse or trust.

4. Using the Data Lake as the Only Data Store

Data lakes handle high-volume, unstructured, and semi-structured data well. They do not replace data warehouses for structured reporting workloads. Companies that force every data use case into one architecture end up with poor query performance and users who revert to pulling data from source systems instead.

5. Keeping Business Teams Out of the Build

Data lake projects owned entirely by IT, with no active input from finance, operations, or product, almost always miss the mark. Engineers build what is technically correct. Business teams need what is practically useful. Those two things overlap, but they are not the same.

6. Underestimating Operational Costs After Launch

Cloud storage is cheap. Compute is not. Egress fees add up. Auto-scaling without budget guardrails can triple an AWS bill in a single month. Many enterprise budgets plan for the build but not the run. Projects get shut down post-launch when the finance team sees the first invoice.

7 Strategies That Keep Data Lake Projects on Track

These come from direct project experience: from builds that worked, and from the fixes we applied to ones that did not.

Strategy 1: Start With a Business Problem, Not a Platform Decision

Before you choose a cloud provider or a file format, write down the specific business question your data lake needs to answer. Not “improve analytics.” Something like: “We need to identify customer churn risk 30 days before it happens.” That single sentence shapes every architecture decision that follows.

A retail client in the Midwest had two failed data lake projects before Hexaview Technologies joined the third attempt. The first two started with cloud selection. The third started with the pricing team’s most urgent question. It went live in 14 weeks.

Strategy 2: Define Data Zones and Schema Contracts Before You Ingest Anything

A three-zone architecture (raw, curated, and consumption) gives your lake structure without blocking flexibility. Define what data lands in each zone, who owns it, and what quality checks must pass before data moves between zones. This is the minimum viable governance model. It takes two weeks to design and prevents months of rework.

Schema contracts do not need to be rigid. Even a simple agreement on field names, data types, and nullability rules for your top five data sources cuts debugging time significantly.
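To make the idea concrete, here is a minimal sketch of a schema contract covering field names, data types, and nullability. It assumes records arrive as Python dictionaries; the `FieldRule` class, the `ORDERS_CONTRACT` fields, and the `contract_violations` helper are hypothetical names chosen for illustration, not part of any specific tool.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class FieldRule:
    """One field in a schema contract: expected type and whether null is allowed."""
    dtype: type
    nullable: bool = False


# Hypothetical contract for an "orders" source; field names are illustrative only.
ORDERS_CONTRACT: dict[str, FieldRule] = {
    "order_id": FieldRule(str),
    "customer_id": FieldRule(str),
    "amount": FieldRule(float),
    "coupon_code": FieldRule(str, nullable=True),
}


def contract_violations(record: dict[str, Any], contract: dict[str, FieldRule]) -> list[str]:
    """Return readable violations for one record; an empty list means it passes."""
    problems = []
    for name, rule in contract.items():
        if name not in record:
            problems.append(f"missing field: {name}")
            continue
        value = record[name]
        if value is None:
            if not rule.nullable:
                problems.append(f"null not allowed: {name}")
        elif not isinstance(value, rule.dtype):
            problems.append(
                f"wrong type for {name}: expected {rule.dtype.__name__}, "
                f"got {type(value).__name__}"
            )
    # Fields outside the contract are reported rather than silently accepted.
    for name in sorted(record.keys() - contract.keys()):
        problems.append(f"unexpected field: {name}")
    return problems
```

A check like this could run as the gate between the raw and curated zones: records with an empty violation list move forward, and the rest stay in raw with the violation messages attached for the source owner.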

Strategy 3: Put Data Quality Checks Inside the Pipeline

Quality checks that run before data reaches the consumption layer catch problems early. Tools like Great Expectations, dbt tests, and Deequ (an open-source library from AWS Labs) plug directly into ingestion pipelines. Set thresholds. Alert on breaches. Never let bad data reach an analyst’s dashboard.

| ❌ Without Pipeline Quality Checks | ✅ With Pipeline Quality Checks |
| --- | --- |
| Analysts find bad data in reports and stop trusting the lake | Bad data is flagged and quarantined before analysts see it |
| Debugging takes days with no clear root cause | Alerts point to the exact source field that failed |
| Engineering spends 40% of sprint time on data fixes | Engineering focuses on new pipelines and features |
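The threshold-and-quarantine pattern can be sketched in a few lines of plain Python. This is an illustration of the idea only, not the API of Great Expectations, dbt, or Deequ; the `quality_gate` function, the `amount_is_valid` check, and the 20% threshold are assumptions made for the example.

```python
from typing import Callable

Row = dict[str, object]


def quality_gate(
    rows: list[Row],
    check: Callable[[Row], bool],
    max_failure_rate: float,
) -> dict[str, object]:
    """Split rows into passed and quarantined, and flag a threshold breach."""
    passed: list[Row] = []
    quarantined: list[Row] = []
    for row in rows:
        (passed if check(row) else quarantined).append(row)
    failure_rate = len(quarantined) / len(rows) if rows else 0.0
    return {
        "passed": passed,
        "quarantined": quarantined,
        "failure_rate": failure_rate,
        # A breach is the signal to alert and to hold the batch back.
        "breach": failure_rate > max_failure_rate,
    }


def amount_is_valid(row: Row) -> bool:
    """Hypothetical rule: 'amount' must be a non-negative number."""
    value = row.get("amount")
    return isinstance(value, (int, float)) and not isinstance(value, bool) and value >= 0


batch = [{"amount": 10.0}, {"amount": 3}, {"amount": -5.0}, {"amount": 7.5}]
result = quality_gate(batch, amount_is_valid, max_failure_rate=0.20)
if result["breach"]:
    print(f"ALERT: {result['failure_rate']:.0%} of rows failed; batch held back")
```

Only the `passed` rows would be promoted to the consumption layer. The quarantined rows stay available for debugging, which is what lets an alert point at the field that failed.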

Strategy 4: Deploy a Data Catalog From Day One

A data catalog tells users what data exists, where it lives, who owns it, and how fresh it is. Without one, people spend more time hunting for data than analyzing it. AWS Glue Data Catalog, Apache Atlas, and Alation are solid options for enterprise teams. The tool matters less than the discipline: catalog every dataset as it lands, not after the lake grows large.
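Whatever tool you choose, each catalog record needs to answer those four questions. The sketch below shows the minimum a record might hold, using an in-memory dictionary as a stand-in for a real catalog; `CatalogEntry`, `register_on_landing`, and the sample dataset are hypothetical and do not reflect the data model of Glue, Atlas, or Alation.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class CatalogEntry:
    name: str               # what data exists
    location: str           # where it lives
    owner: str              # who owns it
    last_updated: datetime  # how fresh it is
    max_age: timedelta      # freshness the owner commits to

    def is_stale(self, now: datetime) -> bool:
        """True when the dataset is older than its agreed freshness window."""
        return now - self.last_updated > self.max_age


def register_on_landing(catalog: dict[str, CatalogEntry], entry: CatalogEntry) -> None:
    """Add a dataset to the catalog when it lands; refuse silent overwrites."""
    if entry.name in catalog:
        raise ValueError(f"dataset already cataloged: {entry.name}")
    catalog[entry.name] = entry


catalog: dict[str, CatalogEntry] = {}
register_on_landing(
    catalog,
    CatalogEntry(
        name="claims_daily",                       # illustrative dataset name
        location="s3://example-lake/curated/claims_daily/",  # placeholder path
        owner="actuarial-data@example.com",        # placeholder owner
        last_updated=datetime(2025, 6, 8, tzinfo=timezone.utc),
        max_age=timedelta(days=1),
    ),
)
```

Calling `register_on_landing` from the ingestion job itself is one way to enforce the discipline: a dataset cannot land without an owner and a freshness commitment.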

Strategy 5: Tag Workloads and Monitor Cloud Costs Weekly

Tag every workload in your cloud account by team and use case. Set budget alerts at 75% and 90% of monthly targets. Use AWS Cost Explorer or Azure Cost Management to review spend every week for the first three months after launch.
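As a rough sketch of how tags and thresholds fit together, the example below groups spend by a `team` tag and reports which of the 75% and 90% thresholds each team has crossed. The line items, budgets, and function names are made up for illustration; in practice the line items would come from a cost export, and the alerts themselves would normally be configured in your cloud provider's budgeting tool.

```python
from collections import defaultdict

# Hypothetical month-to-date line items, already labeled with workload tags.
LINE_ITEMS = [
    {"team": "pricing", "use_case": "churn-model", "usd": 5200.0},
    {"team": "pricing", "use_case": "dashboards", "usd": 2600.0},
    {"team": "ops", "use_case": "route-planning", "usd": 1900.0},
]

# Hypothetical monthly budget targets per team, in USD.
BUDGETS = {"pricing": 8000.0, "ops": 4000.0}


def spend_by_team(items: list[dict]) -> dict[str, float]:
    """Sum spend per 'team' tag."""
    totals: dict[str, float] = defaultdict(float)
    for item in items:
        totals[item["team"]] += item["usd"]
    return dict(totals)


def crossed_thresholds(spend: float, budget: float, thresholds=(0.75, 0.90)) -> list[float]:
    """Return the budget fractions that current spend has reached or passed."""
    if budget <= 0:
        raise ValueError("budget must be positive")
    ratio = spend / budget
    return [t for t in thresholds if ratio >= t]


def budget_alerts(items: list[dict], budgets: dict[str, float]) -> dict[str, list[float]]:
    """Map each team to the alert thresholds its month-to-date spend has crossed."""
    totals = spend_by_team(items)
    return {
        team: crossed_thresholds(totals.get(team, 0.0), budget)
        for team, budget in budgets.items()
    }
```

With the sample data, the pricing team has spent $7,800 of an $8,000 target and has crossed both thresholds, while ops is below both. Untagged workloads would not show up in either total, which is why tagging has to come first.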

Teach your data engineers to write cost-aware queries. In Redshift Spectrum, BigQuery (under on-demand pricing), and Athena, you pay by the volume of data scanned. A query missing a partition filter can scan a full dataset when it only needs a week of data. That is a cost problem and a performance problem at the same time.
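A back-of-the-envelope calculation shows why the partition filter matters. The figures below are assumptions chosen for illustration: a table partitioned by day, 20 GB per daily partition, one year of history, and a price of $5 per TB scanned. Check your provider's current pricing before relying on any number here.

```python
def scan_cost_usd(partitions_scanned: int, gb_per_partition: float, usd_per_tb: float) -> float:
    """Estimated cost of a query that reads the given number of equally sized partitions."""
    tb_scanned = partitions_scanned * gb_per_partition / 1000  # decimal TB, for simplicity
    return tb_scanned * usd_per_tb


# Illustrative assumptions, not measured values or quoted prices.
DAYS_IN_TABLE = 365
GB_PER_DAY = 20.0
USD_PER_TB = 5.0

full_scan = scan_cost_usd(DAYS_IN_TABLE, GB_PER_DAY, USD_PER_TB)  # no partition filter
one_week = scan_cost_usd(7, GB_PER_DAY, USD_PER_TB)               # filter on the last 7 days

print(f"full table: ${full_scan:.2f}")
print(f"one week:   ${one_week:.2f}")
print(f"ratio:      {full_scan / one_week:.0f}x")
```

Under these assumptions the unfiltered query reads about 52 times more data than the filtered one (365 partitions instead of 7). The per-query cost looks small, but a dashboard that refreshes that query every few minutes multiplies it quickly, and the query is slower every time it runs.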

Strategy 6: Hold a Weekly Sync With at Least One Business Stakeholder

A 30-minute weekly sync with a business stakeholder during the build phase keeps priorities aligned. The goal is not to get sign-off on technical decisions but to know which data problems matter most this week. Business priorities shift. If the engineering team does not know about the shift, they spend sprint time building the wrong thing on schedule.

Strategy 7: Run a Readiness Assessment Before the Project Starts

Most failed projects skip the readiness assessment. A two-to-three-week assessment checks four things: data source readiness (is the source data clean enough to ingest?), organizational readiness (do you have the skills in-house?), use case clarity (is the business problem specific enough to build toward?), and governance readiness (do you have a data ownership model?).

This assessment costs a fraction of what a failed build costs. It shows you where your gaps are before they become expensive.

What a Successful Enterprise Data Lake Looks Like

In early 2025, a US logistics company came to Hexaview Technologies with a four-year-old data lake that had stopped working for the business. Pipelines broke weekly. Data was stale. The analytics team ran 80% of reporting from direct database exports because nobody trusted the lake.

We ran a four-week assessment, rebuilt the ingestion layer with schema contracts and automated quality checks, deployed Apache Atlas as a data catalog, and moved the consumption layer to a lakehouse architecture using Apache Iceberg on AWS S3.

Twelve months later: pipeline reliability is above 99.2%. Analyst adoption of the lake went from 20% to 88%. Infrastructure spend fell 34% through query optimization and better partitioning. The analytics team builds reports directly from the lake instead of waiting on data exports.

None of that came from switching technology. It came from getting the process right.

Where to Start

If your data lake is not delivering, ask these three questions:

- Can your analysts find the data they need without asking an engineer?

- Do your pipelines run documented quality checks on every data load?

- Can you trace a number in a dashboard back to its source in under 10 minutes?

If any of those answers is no, you have found your starting point. These are process problems, and process problems have clear solutions.

Hexaview Technologies works with enterprise teams across the US on data lake assessments, architecture design, hands-on builds, and performance optimization. If you want a straight conversation about what your situation needs, reach out. We will tell you what we see, not what you want to hear.